The Highly Infrequent Review of Books: Open City, by Teju Cole

5 November 2011 | In Books Self-indulgence | Comments?

Teju Cole gets me. The big obvious differences – I’ve no relation to Nigeria, as far as I know – fade in comparison to the big astonishing similarities – I think like that, walk like that, listen, read, observe like that. And these things, I believe, are what matter here. Of course I do. It’s impossible for me to keep any sort of critical distance from Open City – the effortless elegance of the prose helps, naturally – I’d blurb it if someone would let me. But the most notable lack of effort is that with which one (or I) submerges into it. There is almost no distance to cover – strumming my not-really-pain with his fingers, typing my life with his words. I would admire him, but modesty (yeah, right) forbids it.

This isn’t really a review, is it? I guess not. You may be sick and tired of Big City Novels where Clever but Somehow Aloof Young Man walks about making Clever and Profound Observations. And that’s mainly what ”Open City” is. But that’s only the format. You may be sick and tired of 60–150 White People playing Notated Music by Dead White Men on Old-fashioned Instruments, too, yet you shouldn’t really rule out Symphonies as something that might be worthwhile, should you?

The Books

16 September 2011 | In academia Books Hate Crime | Comments?

I know, one should not show one’s workings, but ain’t it pretty? It seems each project I’m sort of in, or propose, or dream about generates a small library. They overlap considerably, which is a good thing considering the limited dimensions of time and space allocated to mortals.

The colour coded library

9 February 2011 | In Books | Comments?

I’ve got friends who organize their bookshelves by colour. I’m quite aware that some of you will say ”ex-friends, surely” but no. Intelligent people, equipped with the normal intellectual and aesthetic abilities – and then some – do this, and to the extent that I question their behavior it is because I’m genuinely interested. One of my favorite used-book stores in Lund did colour coded displays every now and then, and it was beautiful. It made for interesting juxtapositions. Which is what you need, in these days when all the books you are likely to come across are those that you were actively looking for, or books some program has determined are suitably similar to what you usually buy.

Sometimes, colour coding happens not as a result of conscious decision, however, but as a consequence of something else. When you are juggling different research interests, for instance (as I do, even if I TRY REALLY HARD to make them overlap), you are likely to organize your library according to topic.

So when I was heavily into happiness research, the books tended to look like this

Now, I’m doing hate crime research, and the books tend to look like this

Yes, I know. You shouldn’t, really, but I bet you would pass a blindfold test.

Wanted

20 January 2011 | In Books Self-indulgence | Comments?

I had the seriously good fortune to find the two other parts of the Sword of Honour trilogy with these rather fabulous cover designs by Bentley, Farrell and Burnett. And now I cannot for the life of me find a copy of the first volume, ”Men at Arms”.

So if you know where one can be found: Tell me. Or buy it yourself and you’ll have amazing leverage in any disagreement we might enter into.

By the way, the volume titled ”Unconditional Surrender” was published in the US under the title ”The End of the Battle”. Bless them, but really.

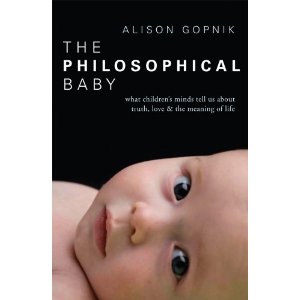

Morality begins

5 January 2011 | In Books Emotion theory Moral Psychology Naturalism parenting Psychology | 3 Comments

Developmental issues in general have, for obvious reasons, been much on my mind lately. It strikes me, as it struck Alison Gopnik, thus causing the book ”The Philosophical Baby” to be written, as strange that the importance of the development of certain capabilities, such as morality, belief-acquisition, language, understanding of objects and other persons, has not been seriously attended to in the theories of those things. Surely, a proper understanding of any domain needs to involve an understanding of how we come to know about it. The cognitive operations that the adult mind is capable of didn’t start out that way, and part of solving the mysteries of cognition is to investigate how it got that way. As Gopnik pointed out in her earlier book ”The Scientist in the Crib”, babies learn in the way science proceeds: by testing hypotheses, revising previous concepts and explanations to fit with the facts, and by thinking up new experiments. We start out with very little, but not nothing, and then we build on that. People generally start out the same – babies everywhere can learn any language – but at some point, when we’ve found what sorts of sounds typically occur in communication, we start to interpret, and eventually to ignore small vocal nuances in favor of more effective and more charitable interpretation within the language we thus acquire.

Understanding development is important in itself, and for understanding what it is that thus developed, but it is also important for treatment. If we know how certain capabilities develop, we might understand what happens when they don’t.

But here comes the first kink: scientists disagree about a key feature of development: whether we actually learn ”the hard way”, or whether certain developmental stages, such as understanding that others may have different beliefs from us, just ”kick in” at a certain age. Some knowledge may develop, not like conscious, or even non-conscious, belief-revision, but like facial hair or breasts. Presumably, these things start due to some biological signal, too, but it seems to be a different process from the sort of learning involved in science. It is also possible that the ”signal” in question must appear within a certain window of time. The intense developmental period known as childhood doesn’t last forever. For instance, if you cover the eyes of a cat from birth until a certain time, it won’t develop eyesight at all.

These things are even more important in the case of treatment. If I fail to develop certain forms of understanding, such as understanding false beliefs, it matters a great deal whether I can learn them, or whether I need the biological signal. And, of course, whether this biological signal can be provided later on, or if it is too late.

Understanding these features when it comes to morality is clearly of immense interest. How does morality develop? We often hear that children can distinguish between moral and conventional rules at the age of 2 1/2 – 3. But how does this happen? How does one learn the difference? Clearly, we are born with a sense of good and bad (as I’ve argued, this is the capacity to feel pleasure and displeasure, and certain objects and situations that cue these feelings), and with the early stages of social neediness. From this, arguably, morality is created. But how? Is it just the persistent association of the needs/desires/interests of others with hedonic reaction in oneself? Or is it a further developmental stage that is needed?

This is a crucial thing, if we want to understand and do something about immorality. Immorality may, of course, arise in many ways. It may not have been nurtured, so that the right association wasn’t made in the crucial developmental window. But it may also be that the mechanism didn’t kick in, due to some cognitive disorder. And finally, there are cases where the moral reaction is just outnumbered by other interests: morality isn’t all of evaluative motivation. Which of these is the origin of a certain immoral act or immoral person is of immense interest when it comes to treatment, and also when it comes to assigning responsibility.

Stein on copying

16 October 2010 | In Books Moral Psychology Psychology Self-indulgence | Comments?

There are many that I know and they know it. They are all of them repeating and I hear it. I love it and I tell it. I love it and now I will write it. This is now a history of my love of it. I hear it and I love it and I write it. They repeat it. They live it and I see it and I hear it. They live it and I hear it and I see it and I love it and now and always I will write it. There are many kinds of men and women and I know it. They repeat it and I hear it and I love it. This is now a history of the way they do it. This is now a history of the way I love it

Gertrude Stein

Just to drive the point home, I copied that quote from the New Yorker book blog, which copied it from Marcus Boon’s book ”In Praise of Copying”, which is available free of charge here. You really have to copy and paste Stein quotes because, with the possible exception of the really short ones, her sentences are impossible to remember. The style, however, isn’t.

One of my very few poems was a tribute to Gertrude Stein. It’s a rather bad poem, in particular as I’m pretty sure that should be ”contemporary with”, not ”contemporary to”.

Dear Gertrude.

How typical of you

to be contemporary to

so few of your contemporaries

On work and idleness

9 October 2010 | In Books Happiness research Hedonism Moral Psychology politics Psychology Self-indulgence TV | 1 Comment

I’m coming to you from (blogging is live, no?) a coffee shop in Gothenburg, where I’m spending this morning preparing next week’s lectures on applied ethics. (First out is animal ethics, which I have to weave together with the ethics of abortion, since we didn’t manage to conclude that subject on Friday. Luckily, this is not a hard thing to do.)

It’s a good morning. It’s a very good morning. In fact, I’ve done more work in the past two hours than I did all day yesterday. Which is good for present me, but also a bit annoying for that curmudgeon I was most of yesterday.

What it means is that if I knew how to get to this point of effectiveness, even if it took some time (in fact, if it took less than six hours), it would have been rational to spend the main part of the day doing that, and just work for two hours, rather than working at a much slower rate for eight. It would be rational for another reason too: I’ve found that the way to get to this point is to do things that are nice. Talking to friends and family, reading fiction, taking walks, listening to music or watching television. Good television, I hasten to qualify, because it seems the assigned function of being ”relaxing” is actually not truly attributable to all, or even the majority of, TV-watching. We just think it is, because it makes us tired, and then we come to believe that we really needed the relaxation in the first place.

Ideally, of course, I would spend my free time doing the things that make me work like this for the full eight (or so) work hours. But things are not, entirely, ideal. Knowing that, it’s important to leave your work place occasionally and be idle. Do what you feel like doing, if your conscience and work-ethic will let you. Some companies, famously Google, seem to have grasped this idea and achieve great results for that reason. Of course, this is only true if your work is such that how effectively you can do it depends crucially on your mood and creativity.

Bertrand Russell’s wonderful little essay ”In Praise of Idleness” is about precisely this. People should have more time to pursue and develop their interests not only because it makes them happier – and happiness is, after all, what we want them to achieve – but also because they work better if they’re allowed to do that sort of thing. The worry that the working class would be up to no good if given free time to conspire was based on the fact that as things were, they took to drink, say, or fighting when off work. But in so far as that’s true, it’s because they were unhappy, and hadn’t had the time to develop worthwhile pastimes.

Stress is not primarily a consequence of having a lot to do, but of getting nothing done, or getting less done than you imagine that you should. (Having a lot to do may cause that, but need not, and should not. Extremely few of your tasks, I think you’ll find, are done better under stress.)

I’ll return to those lectures now. Because I actually really like to.

Moral Babies

8 May 2010 | In Books Emotion theory Moral Psychology Naturalism parenting Psychology Self-indulgence | Comments?

The last few years have seen a number of different approaches to morality become trendy and arouse media interest. Evolutionary approaches, primatological, cognitive science, neuroscience. Next in line are developmental approaches. How, and when, does morality develop? From what origins can something like morality be construed?

Alison Gopnik devoted a chapter of her ”The Philosophical Baby” to this topic and called it ”Love and Law: the origins of morality”. And just the other day, Paul Bloom had an article in the New York Times reporting on the admirable and adorable work being done at the Infant Cognition Center at Yale.

Basically, we used to think (under the influence of Piaget/Kohlberg) that babies were amoral, and in need of socialization in order to be proper, moral beings. But work at the lab shows that babies have preferences for kind characters over mean characters quite early, maybe as early as age 6 months, even when the kindness/meanness doesn’t affect the baby personally. The babies observe a scene in which a character (in some cases a puppet, in others, a triangle or square with eyes attached) either helps or hinders another. Afterwards, they are shown both characters, and they tend to choose the helping one. Slightly older babies, around the age of 1, even choose to punish the mean character. Bloom’s article begins:

Not long ago, a team of researchers watched a 1-year-old boy take justice into his own hands. The boy had just seen a puppet show in which one puppet played with a ball while interacting with two other puppets. The center puppet would slide the ball to the puppet on the right, who would pass it back. And the center puppet would slide the ball to the puppet on the left . . . who would run away with it. Then the two puppets on the ends were brought down from the stage and set before the toddler. Each was placed next to a pile of treats. At this point, the toddler was asked to take a treat away from one puppet. Like most children in this situation, the boy took it from the pile of the “naughty” one. But this punishment wasn’t enough — he then leaned over and smacked the puppet in the head.

In a further twist on the scenario, babies (at 8 months) were asked to choose between still other characters who had either rewarded or punished the behavior displayed in the first scenario. In this experiment, the babies tended to go for the ”just” character. This is quite amazing, seeing how the last part of the exchange would have been a punishment (which is something bad happening, though to a deserving agent). It takes quite extraordinary mental capacities to pick the ”right” alternative in this scenario.

If babies are born amoral, and are socialized into accepting moral standards, something like relativism would arguably be true, at least descriptively. Descriptively, too, relativism often seems to hold: we value different things and a lot of moral disagreement seems to be impossible to solve. In some moral disagreement, we reach rock-bottom, non-inferred moral opinions and the debate can go no further. This is what happens when we ask people for reasons: they come to an end somewhere, and if no commonality is found there, there is nothing left to do.

A common feature of the evolutionary, biological, neurological etc. approaches to morality is that they don’t want to leave it at that. If no commonality is found in what we value, or in the reasons we present for our values, we should look elsewhere, to other forms of explanation. We want to find the common origin of moral judgments, if nothing else in order to diagnose our seemingly relativistic moral world. But possibly, this project can be made more ambitious, and claim to found an objective morality on whatever common origins occur in those explanations.

If the earlier view on babies is false, if we actually start off with at least some moral views (which might then be modulated by culture to the extent that we seem to have no commonality at all), and these keep at least some of their hold on us, we do seem to have a kind of universal morality.

We start life, not as moral blank slates, but pre-set to the attitude that certain things matter. Some facts and actions are evaluatively marked for us by our emotional reactions, and can be revealed by our earliest preferences. Preferences can be conditioned into almost any kind of state (even though some types of objects will always be better at evoking them), so it’s often hard to find this mutual ground for reconciliation in adults, and that is precisely why it’s such a splendid idea to do this sort of research on babies.

The baby critic

15 April 2010 | In Books Comedy media parenting Psychology Self-indulgence TV | 3 Comments

Through the looking glass, okay?

A few months back, to the great amusement of late night talkshows (US) and topical comedy quiz participants (UK), a group of scientists lodged a complaint against a trend in current cinematic science fiction: It’s not realistic enough. The sciency part of it is not good enough. Science fiction stories should help themselves to only one major transgression against the laws of physics, argued Sidney Perkowitz. To exceed this limit is just lazy story-telling – time travel being a bit like the current French monarch in most Molière plays. The best works of science fiction follow that almost experimental formula: change only one parameter and see how the story unravels.

The criticism that started already in the first season of ”Lost” and has become louder ever since was precisely this: the writers clearly have no idea what they’re on about, they haven’t even decided which rules of physics they have altered. The viewer is constantly denied the pleasure of running ahead with the consequences of the changed premise and then watching how the story runs its logical course. Of course, a writer may add surprises, there is pleasure in that too, but you cannot constantly change the rules without adding a rationale for that change. That’s just cheating (or it’s playing a different game altogether. That is acceptable, of course, I’m not saying it isn’t, I just think this accounts for a lot of the frustration people experience with shows like ”Lost” or ”Heroes”).

The comedians who ridicule the scientists claim that the latter miss the point: Science fiction is supposed to be fiction. But in fact the point is that even fiction, at least good fiction, is not arbitrary.

It struck me that the point made by this group of scientists is very much the reaction that kids have when you break the rules in their pretend play. (There’s an excellent account of this in the opening chapters of Alison Gopnik’s book ”The Philosophical Baby”.)

One of the interesting things about kids is their ability to, and interest in, pretend play. They are from a very early age able to follow, or to make up, counterfactual stories and imaginary friends and foes, and the stories that play out have a sort of logic. If you spill pretend tea, you leave a mess that needs to be pretend-mopped up. Many psychologists now argue that this is more or less the point of pretend play: you work out what would happen if something, that does in fact not happen, were to happen. The more outlandish the countered fact, the more work you need to put in to draw the right, or sensible, conclusions, and the more adept you become at reasoning, planning and coming up with great ideas. Stories that don’t further that project might be nice nevertheless: literature has other functions, after all. But the decline in this particular quality in current science fiction is still a sound basis for criticism. Even a baby can see that.

A unique set of influences

14 April 2010 | In Books parenting Psychology Self-indulgence | Comments?

In one of the early notebooks in which I used to put the kind of thoughts, rants and musings that nowadays make it into this blogish existence I made some sort of remark about how to overcome the anxiety of influence; the suspicion that all one’s work is somehow derivative. ”One can at least aspire” I wrote (or something like that, I obviously didn’t bother to actually find the thing. It’s a notebook, for crying out loud. It doesn’t even have a ”search” function) ”One can at least aspire to be the result of a unique set of influences”. In other words: it doesn’t much matter whether one is little more than the effect of what one has read, seen, heard etc. since the longevity of life in the plastic state makes sure that some originality will ensue even from that process. In addition: to track down the complete set of sources that ”made” a particular author/thinker is excellent fun. One can even toy with that sort of thing in one’s writings, provide hints and such (misleading ones, if one wants to be clever).

Anyway, I’m going somewhere with this. Oh, yes: I find that most things I write are, in hindsight, quite clearly the result of what I was interested in at the time, even when those things were not obviously related to begin with. Thus, for instance, it is highly unlikely that my dissertation would have gone down the way it did, were it not for the fact that I happened to be into cognitive science just before I got the job (much to the dismay of my supervisors). The sort of value theory I was into before that was much more of a dry, conceptual analysis kind of thing.

So I’m pretty sure that something interesting will come from my current preoccupation with the two subjects of psychopathy and child (infant, actually) psychology. It’s not hard to find a link, obviously: developmental processes are key in both areas, but I’m very likely to make a big point out of this, merely for the reason that these are the things that interest me now.

For instance: one current trend in child psychology is to stress the wide, undiscriminating attention of infants and toddlers (more of a lamp than a spotlight), which makes them better than adults at noticing task-irrelevant features. Psychopaths, according to another book I’m reading, are quite the opposite: one of the cognitive peculiarities of psychopaths is their ability to focus, and their inability to remember task-irrelevant features. As pointed out in the previous post, attention may suffer when the amount of information increases, but the reverse is true as well. The inability to shift attention when previously irrelevant information becomes relevant, or shows you that a shift is needed, is clearly a problem in a variable environment, such as our social one. Infants are in the process of finding out what is relevant, and thus need not focus attention just yet.

My third current interest is in the cognitive science of literature. I’m likely to find a way to make that relevant to the project as well.

(Currently reading)